Perceptron

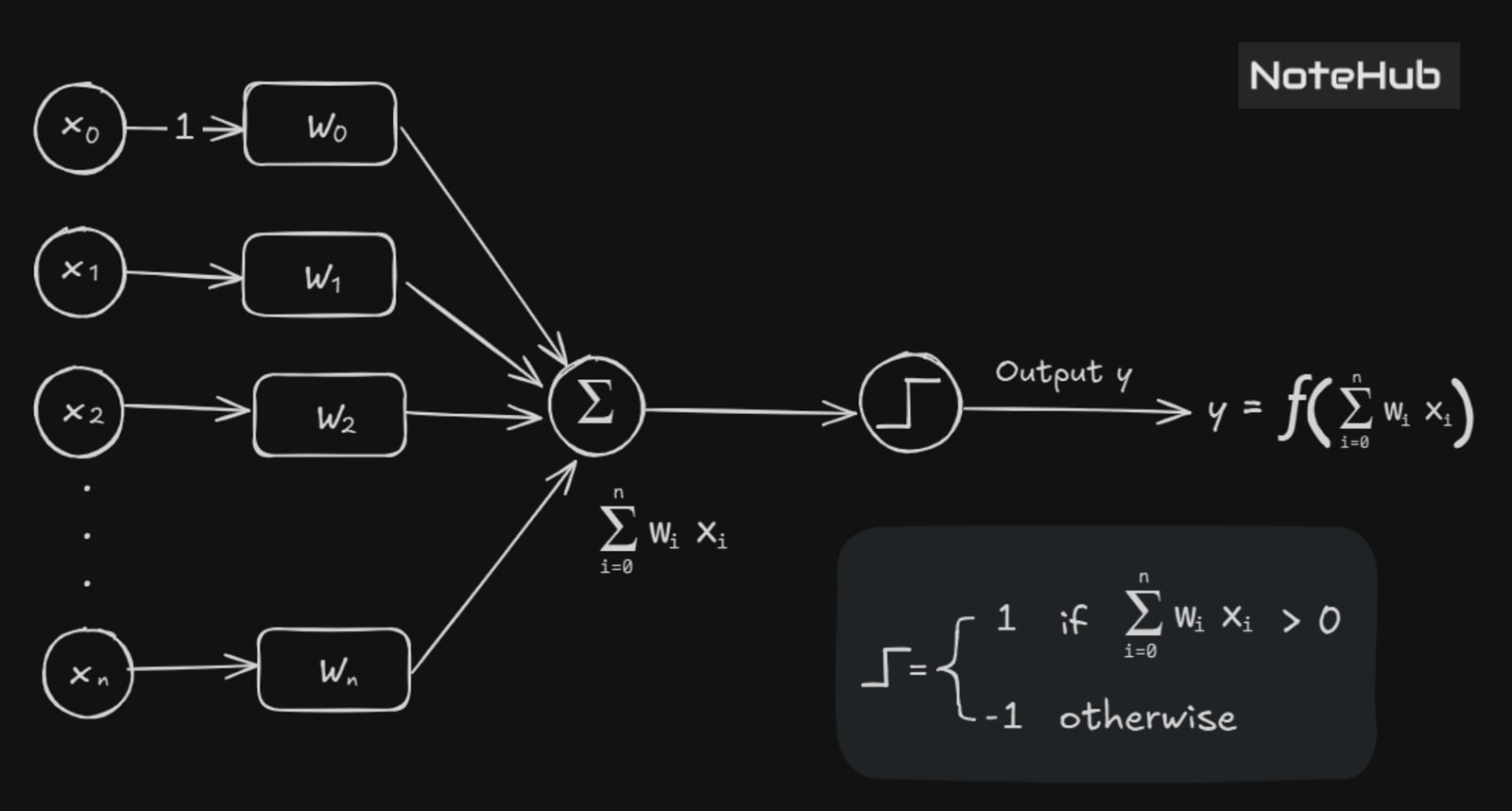

Think of the perceptron as a simple decision-maker: it looks at its inputs, weighs their importance, and makes a yes-or-no judgment. It was one of the first steps toward building intelligent systems, and it remains central to understanding how neural networks work. By learning to draw a boundary between classes of data, the perceptron forms the foundation for more advanced models used in real-world applications.

Definition:

A Perceptron is an artificial neuron in which the activation function is a threshold function.

Terminology:

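$x_1, x_2, \dots, x_n$ = input signals

$w_1, w_2, \dots, w_n$ = associated weights

$x_0$ = bias input (typically set to 1)

$w_0$ = bias weight (adjusts the activation threshold)

$z$ = weighted sum of inputs, $\sum_{i=0}^{n} w_i x_i$

$f$ = activation function (determines the output)

$y$ = final output signal, $f(z)$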

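The neuron is called a perceptron if its output is given by the following function:

$$
y = \begin{cases} 1 & \text{if } \sum_{i=0}^{n} w_i x_i > 0 \\ 0 & \text{otherwise} \end{cases}
$$

where $x_0 = 1$ is the bias input and $w_0$ is the bias weight.

This is a step function. Because $x_0 = 1$, the condition is the same as $w_1 x_1 + \dots + w_n x_n > -w_0$: if the weighted sum of the inputs exceeds the threshold $-w_0$, the neuron "fires" (outputs 1); otherwise it outputs 0.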
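The threshold rule above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `perceptron_output` and the example weights are hypothetical, and the bias input is assumed to be fixed at 1.

```python
def perceptron_output(weights, inputs):
    """Return 1 if the weighted sum (bias included) is positive, else 0.

    weights: [w0, w1, ..., wn], where w0 is the bias weight.
    inputs:  [x1, ..., xn]; the bias input x0 = 1 is prepended here.
    """
    x = [1.0] + list(inputs)  # x0 = 1 is the bias input (assumption)
    weighted_sum = sum(w * xi for w, xi in zip(weights, x))
    return 1 if weighted_sum > 0 else 0


# Hypothetical weights that happen to realise a logical AND of two binary inputs.
and_weights = [-1.5, 1.0, 1.0]
outputs = [perceptron_output(and_weights, x) for x in [(0, 0), (0, 1), (1, 0), (1, 1)]]
print(outputs)  # [0, 0, 0, 1]
```

Only the input (1, 1) pushes the weighted sum above zero, so it is the only case in which the neuron fires.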

Perceptron Learning Algorithm

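In the algorithm, we use the following notation:

| Symbol | Description |
|---|---|
| $n$ | Number of input variables |
| $y_j$ | Output for input vector $x_j$ |
| $x_j$ | The $j$-th input vector, $x_j = (x_{j,0}, x_{j,1}, \dots, x_{j,n})$ |
| $d_j$ | Desired output for input $x_j$ |
| $x_{j,i}$ | Value of the $i$-th feature of input $x_j$ |
| $x_{j,0}$ | Bias input (always 1) |
| $w_i$ | Weight for the $i$-th input |
| $w_i(t)$ | Weight for the $i$-th input at iteration $t$ |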

Algorithm Steps

Step 1: Initialization

Initialize the weights $w_i(0)$ for $i = 0, 1, \dots, n$.

They can be initialized to 0 or to small random values.

This includes the bias weight $w_0$, which sets the activation threshold.

Step 2: Training (for each training sample)

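For each example $j$ in the training set $D = \{(x_1, d_1), \dots, (x_s, d_s)\}$, perform the following steps over the input $x_j$ and desired output $d_j$:

Compute the output $y_j(t)$

This is the predicted output of the perceptron after processing input $x_j$ using the current weights at iteration $t$. With $f$ denoting the threshold activation function, the perceptron computes this using:

$$
y_j(t) = f\left(\sum_{i=0}^{n} w_i(t)\, x_{j,i}\right) = f\big(w_0(t)\, x_{j,0} + w_1(t)\, x_{j,1} + \dots + w_n(t)\, x_{j,n}\big)
$$

Update weights for each $i = 0, 1, \dots, n$ using:

$$
w_i(t+1) = w_i(t) + \big(d_j - y_j(t)\big)\, x_{j,i}
$$

The weights change only when the prediction is wrong, because $d_j - y_j(t) = 0$ for a correct prediction.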
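To make the compute-then-update step concrete, here is a small Python sketch for a single training sample. It assumes the plain update $w_i \leftarrow w_i + (d - y)\,x_i$ with no separate learning rate (equivalently, a step size of 1); the names `step` and `update_weights` are hypothetical.

```python
def step(z):
    """Threshold activation: 1 if z is positive, else 0."""
    return 1 if z > 0 else 0


def update_weights(weights, x, d):
    """Apply one perceptron update for a single sample.

    weights: [w0, ..., wn]
    x:       [x0, x1, ..., xn] with x0 = 1 (bias input)
    d:       desired output (0 or 1)
    Returns the new weights and the output computed with the old weights.
    """
    y = step(sum(w * xi for w, xi in zip(weights, x)))
    # Assumed rule: w_i <- w_i + (d - y) * x_i, i.e. a step size of 1.
    new_weights = [w + (d - y) * xi for w, xi in zip(weights, x)]
    return new_weights, y


w = [0.0, 0.0, 0.0]
x = [1, 1, 1]  # x0 = 1, followed by two feature values
w_next, y = update_weights(w, x, d=1)
print(y, w_next)  # 0 [1.0, 1.0, 1.0]
```

The sample is misclassified with the zero weights, so every weight moves by $(1 - 0) \cdot x_i$. Applying the same update again leaves the weights unchanged, because the sample is then classified correctly.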

Step 3: Repeat Until Convergence

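Repeat Step 2 until either:

The average error per iteration, taken over the $s$ training samples, is less than a predefined threshold $\gamma$:

$$
\frac{1}{s} \sum_{j=1}^{s} \left| d_j - y_j(t) \right| < \gamma
$$

OR

A maximum number of iterations is reached.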
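Putting the three steps together, the following Python sketch trains a perceptron on the logical AND function. It is an illustration under stated assumptions rather than a definitive implementation: weights start at 0, the update uses a step size of 1, the error is averaged over one pass through the training set, and the names `train_perceptron`, `gamma`, and `max_epochs` are hypothetical.

```python
def step(z):
    """Threshold activation: 1 if z is positive, else 0."""
    return 1 if z > 0 else 0


def predict(weights, features):
    x = [1.0] + list(features)  # prepend the bias input x0 = 1
    return step(sum(w * xi for w, xi in zip(weights, x)))


def train_perceptron(samples, n, gamma=0.01, max_epochs=100):
    """Train on samples [(features, desired), ...] with n features each.

    Stops when the average error over one pass drops below gamma,
    or after max_epochs passes. Returns (weights, passes_used).
    """
    weights = [0.0] * (n + 1)  # Step 1: initialise all weights to 0
    for epoch in range(1, max_epochs + 1):
        total_error = 0
        for features, d in samples:  # Step 2: one update per sample
            x = [1.0] + list(features)
            y = step(sum(w * xi for w, xi in zip(weights, x)))
            total_error += abs(d - y)
            weights = [w + (d - y) * xi for w, xi in zip(weights, x)]
        if total_error / len(samples) < gamma:  # Step 3: stopping test
            return weights, epoch
    return weights, max_epochs


and_samples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, epochs = train_perceptron(and_samples, n=2)
predictions = [predict(weights, features) for features, _ in and_samples]
print(weights, epochs, predictions)
```

AND is linearly separable, so the loop stops through the error test well before the iteration cap. For data that is not linearly separable (XOR, for example), the error never reaches zero and the cap on iterations is what ends training.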