Recurrent Neural Network (RNN)

A Recurrent Neural Network (RNN) is a type of neural network designed to handle sequential data. Unlike traditional feed-forward networks, RNNs use connections that form cycles, allowing them to maintain a "memory" of previous inputs. This makes them suitable for tasks where the current output depends on previous steps (e.g., predicting the next word in a sentence).

How RNN Works

The input vector is passed into the hidden layer at each time step.

The hidden state is calculated using both the current input and the previous hidden state.

The output is generated from the hidden state.

At the final time step, the last hidden state can be used to calculate the overall output.

During training, errors are propagated backward through the time steps (Backpropagation Through Time, BPTT) to update the weights.
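The forward steps above can be sketched in plain Python. This is a minimal illustration, not a reference implementation: it assumes a tanh activation, a zero initial hidden state, and a linear output layer, none of which are specified in these notes. The names `rnn_forward`, `matvec`, `W_xh`, `W_hh`, `W_hy`, `b_h`, and `b_y` are hypothetical, and the weights are arbitrary toy values. Training with BPTT is not shown.

```python
import math

def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]

def rnn_forward(inputs, W_xh, W_hh, W_hy, b_h, b_y):
    """Run a simple tanh RNN over a sequence; return per-step outputs and hidden states."""
    h = [0.0] * len(b_h)              # assumed initial hidden state: all zeros
    hidden_states, outputs = [], []
    for x_t in inputs:                # one iteration per time step
        # Hidden state from the current input and the previous hidden state:
        # h_t = tanh(W_xh x_t + W_hh h_{t-1} + b_h)
        pre = [a + b + c for a, b, c in zip(matvec(W_xh, x_t), matvec(W_hh, h), b_h)]
        h = [math.tanh(p) for p in pre]
        # Output from the hidden state: y_t = W_hy h_t + b_y
        y_t = [a + b for a, b in zip(matvec(W_hy, h), b_y)]
        hidden_states.append(h)
        outputs.append(y_t)
    return outputs, hidden_states

# Toy setup: 2-dimensional inputs, 3 hidden units, 1 output (arbitrary weights).
W_xh = [[0.5, -0.2], [0.1, 0.4], [-0.3, 0.2]]
W_hh = [[0.1, 0.0, 0.2], [0.0, 0.1, -0.1], [0.2, -0.2, 0.1]]
W_hy = [[1.0, -1.0, 0.5]]
b_h = [0.0, 0.0, 0.0]
b_y = [0.0]
sequence = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

outputs, hidden_states = rnn_forward(sequence, W_xh, W_hh, W_hy, b_h, b_y)
final_output = outputs[-1]            # output at the final time step
```

The same weight matrices are reused at every time step; only the hidden state changes as the sequence is consumed.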

Why RNN is Needed

Feed-forward networks process each input independently, so they have no built-in way to model the order of sequential data.

They keep no state between inputs, so they cannot remember past inputs or outputs.

RNNs solve this by retaining information through hidden states, making them effective for sequential tasks like speech, text, and time-series prediction.
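To make the contrast concrete, the sketch below compares a stateless model with a scalar recurrent one on two sequences that end in the same value but have different histories. The functions `memoryless` and `recurrent` and their weights are hypothetical, chosen only for illustration.

```python
import math

def memoryless(x_t, w=0.8):
    """A stateless model: the output depends only on the current input."""
    return math.tanh(w * x_t)

def recurrent(sequence, w_x=0.8, w_h=0.5):
    """A scalar recurrent model: each step mixes the input with the previous state."""
    h = 0.0
    for x_t in sequence:
        h = math.tanh(w_x * x_t + w_h * h)
    return h

seq_a = [1.0, 0.5]
seq_b = [-1.0, 0.5]   # same final input as seq_a, different history

# The stateless model sees only the last input, so it cannot tell the sequences apart.
same_without_memory = memoryless(seq_a[-1]) == memoryless(seq_b[-1])
# The recurrent model carries the first input forward in its hidden state.
differs_with_memory = recurrent(seq_a) != recurrent(seq_b)
```

Here `same_without_memory` and `differs_with_memory` are both true: only the recurrent model's result reflects what came before the final input.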

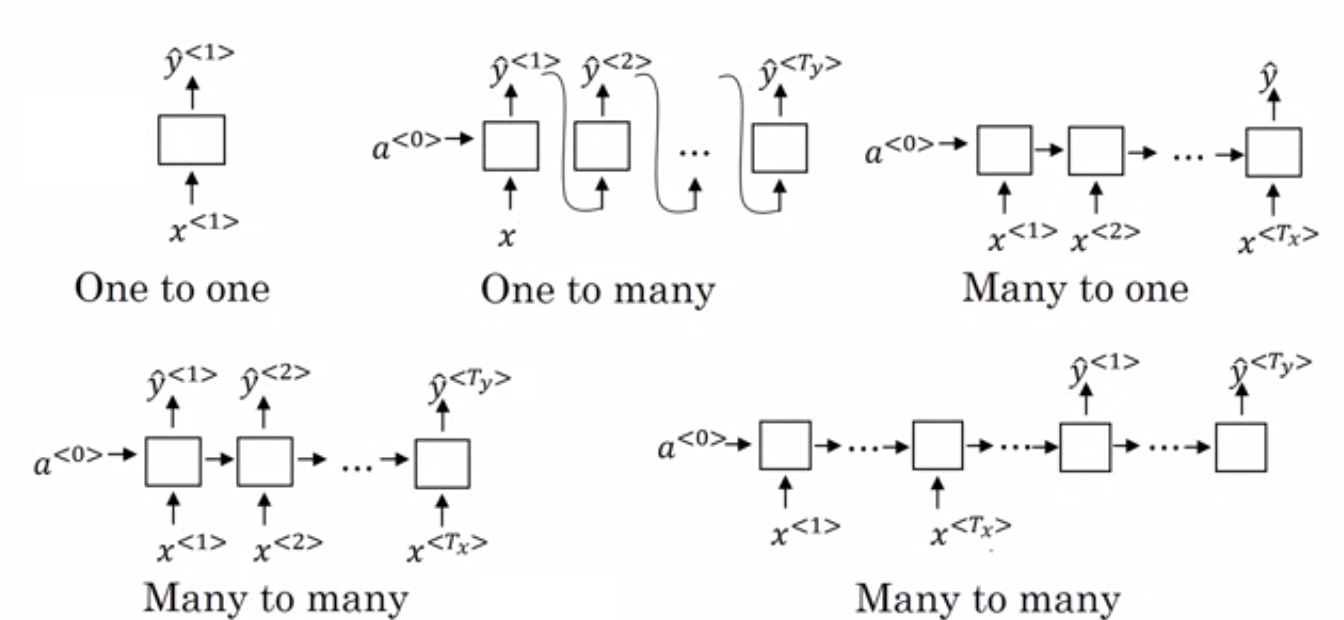

Types of RNN

One-to-One → Single input, single output (e.g., image classification).

One-to-Many → Single input, multiple outputs (e.g., image captioning).

Many-to-One → Multiple inputs, single output (e.g., sentiment analysis).

Many-to-Many → Multiple inputs and outputs (e.g., machine translation, video processing).
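The sequence-based patterns above can be sketched with one shared recurrent step. This is a simplified illustration under several assumptions: the cell is a scalar tanh unit with arbitrary weights, the hidden state doubles as the output, the many-to-many version emits one output per input step, and the one-to-many version feeds each output back in as the next input. All function names are hypothetical.

```python
import math

def step(x_t, h_prev, w_x=0.7, w_h=0.4):
    """One recurrent step of a scalar tanh cell (arbitrary weights)."""
    return math.tanh(w_x * x_t + w_h * h_prev)

def many_to_many(sequence):
    """Return one output per input step."""
    h, outputs = 0.0, []
    for x_t in sequence:
        h = step(x_t, h)
        outputs.append(h)
    return outputs

def many_to_one(sequence):
    """Return only the output produced after the last input step."""
    return many_to_many(sequence)[-1]

def one_to_many(x, num_steps):
    """Unroll from a single input; later steps receive the previous output (assumed scheme)."""
    h, outputs = 0.0, []
    x_t = x
    for _ in range(num_steps):
        h = step(x_t, h)
        outputs.append(h)
        x_t = h               # feed the output back in as the next input
    return outputs

per_step = many_to_many([0.2, -0.1, 0.9])   # three inputs, three outputs
summary = many_to_one([0.2, -0.1, 0.9])     # three inputs, one output
generated = one_to_many(0.5, 4)             # one input, four outputs
```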

Advantages of RNN

Sequential memory: Retains information from previous inputs.

Time-series prediction: Past data helps predict future values.

Combination with CNNs: Can be used with convolutional layers to capture spatial + sequential features (useful in video and image tasks).

Limitations of RNN

Vanishing gradient problem: Gradients shrink as they are backpropagated through many time steps, making it hard to learn long-term dependencies.

Exploding gradient problem: Gradients grow too large, causing unstable training.

Slow training: Sequential nature limits parallelization compared to CNNs/Transformers.
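The vanishing and exploding gradient problems can be seen in a deliberately simplified case. The sketch below assumes a scalar linear recurrence h_t = w_h * h_{t-1} with no activation function, so the gradient of the final state with respect to the initial state is just w_h multiplied by itself once per time step. The function name `gradient_through_time` is hypothetical.

```python
def gradient_through_time(w_h, num_steps):
    """Gradient of h_T with respect to h_0 for the linear recurrence h_t = w_h * h_{t-1}."""
    grad = 1.0
    for _ in range(num_steps):
        # Chain rule: each time step contributes a factor d h_t / d h_{t-1} = w_h.
        grad *= w_h
    return grad

vanishing = gradient_through_time(0.5, 50)   # recurrent weight below 1: gradient shrinks
exploding = gradient_through_time(1.5, 50)   # recurrent weight above 1: gradient grows
```

After 50 steps the first gradient is on the order of 1e-15 and the second on the order of 1e8, which is why early time steps either receive almost no learning signal or destabilize training. Real RNNs use weight matrices and nonlinear activations, so the behavior is less clean, but the repeated multiplication is the same underlying cause.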

Applications of RNN

Speech recognition

Time-series prediction

Natural Language Processing (NLP): language modeling, sentiment analysis, machine translation

Image & video processing